Echo analysis

Motivation

A friend of mine, Felix Hummel, showed me a fun project of his: he produces a high-pitched sound pulse, records the acoustic response, and draws conclusions about the distance to the walls, like an echo sounder. I found this a simple, ingenious idea and wanted to reproduce it.

Implementation

The implementation is available in the echo-analysis git repository. It is split into two components:

- echorec: A tool that plays a sound while simultaneously recording the acoustic feedback. It is written in C and based on the PulseAudio Asynchronous API.

- EchoAnalysis.ipynb: An IPython notebook that generates a pulse, uses echorec (via the Python wrapper in echorec.py) to play it and record the echo, and then does some signal processing using SciPy.

For build instructions, see the README.

Demo

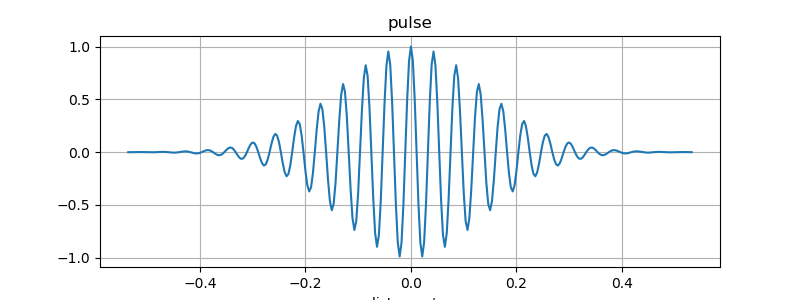

An 8 kHz pulse sampled at 96 kHz, as played by the speakers, may look like this:

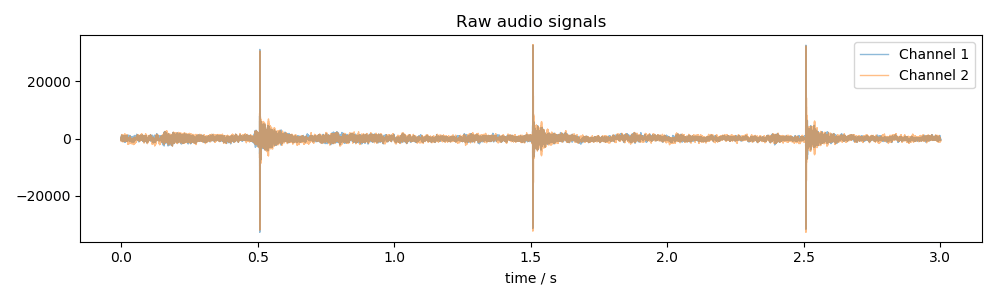
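As a rough illustration of how such a pulse could be generated with NumPy, here is a minimal sketch. Only the 96 kHz sampling rate and the 8 kHz pulse frequency come from the text above; the 2 ms duration, the Hann window, and the name `make_pulse` are assumptions made for this example and need not match what the notebook does.

```python
import numpy as np

FS = 96_000        # sampling rate in Hz (from the text)
F_PULSE = 8_000    # pulse frequency in Hz (from the text)


def make_pulse(duration=0.002, fs=FS, freq=F_PULSE):
    """Return a Hann-windowed sine burst as a float array in [-1, 1].

    The duration and the Hann window are assumptions for illustration.
    """
    n = int(round(duration * fs))
    t = np.arange(n) / fs
    # The window fades the burst in and out to avoid audible clicks
    # and to keep its spectrum concentrated around freq.
    return np.sin(2 * np.pi * freq * t) * np.hanning(n)


pulse = make_pulse()
```

A short, windowed burst keeps the pulse compact in time, which helps to separate reflections that arrive close together.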

The raw audio signal captured while playing the pulse three times, including some background sound, may then look like this:

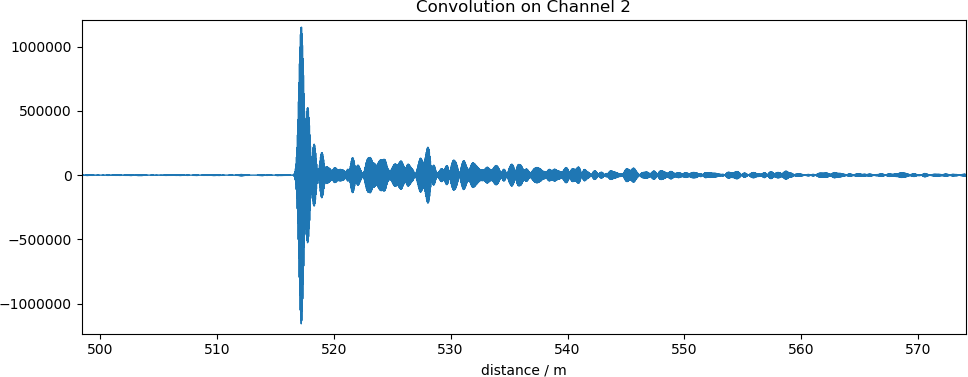

After band-pass filtering and computing a convolution with the pulse we obtain this:

The response about 10 m (time multiplied by the speed of sound) after the initial peak may be the reflection from the walls of the room I am in right now, which are about 5 m away: the sound travels to the wall and back, so the path length is twice the distance.
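The processing chain described above (band-pass filter, convolution with the pulse, time to distance) can be sketched with SciPy on a synthetic recording. This is not the notebook's code. The band edges, the filter order, the Hilbert envelope, the 343 m/s speed of sound, and all function names are assumptions made for this example. The sketch also convolves with the time-reversed pulse, which amounts to a cross-correlation, so that the peak lines up with the arrival time of each echo.

```python
import numpy as np
from scipy import signal

FS = 96_000              # sampling rate in Hz (from the text)
F_PULSE = 8_000          # pulse frequency in Hz (from the text)
SPEED_OF_SOUND = 343.0   # m/s in air at about 20 °C (assumed)


def band_pass(x, fs=FS, low=6_000, high=10_000, order=4):
    """Zero-phase Butterworth band-pass; the band edges are assumed values."""
    sos = signal.butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return signal.sosfiltfilt(sos, x)


def echo_envelope(recording, pulse, fs=FS):
    """Band-pass the recording, correlate it with the pulse, return the envelope."""
    filtered = band_pass(recording, fs)
    # Convolving with the time-reversed pulse is a cross-correlation.
    corr = signal.fftconvolve(filtered, pulse[::-1], mode="full")
    # Drop the first len(pulse) - 1 samples so that index k means lag k.
    corr = corr[len(pulse) - 1:]
    return np.abs(signal.hilbert(corr))


def lag_to_wall_distance(lag_samples, fs=FS, c=SPEED_OF_SOUND):
    """The sound travels to the wall and back, so halve the path length."""
    return 0.5 * c * lag_samples / fs


# Synthetic check: direct sound plus a weaker echo from a wall 5 m away.
n = 192
t = np.arange(n) / FS
pulse = np.sin(2 * np.pi * F_PULSE * t) * np.hanning(n)
start = 500                                         # arbitrary playback latency
delay = int(round(2 * 5.0 / SPEED_OF_SOUND * FS))   # round trip in samples
rng = np.random.default_rng(0)
recording = 0.01 * rng.standard_normal(FS // 10)    # background noise
recording[start:start + n] += pulse
recording[start + delay:start + delay + n] += 0.3 * pulse

env = echo_envelope(recording, pulse)
direct = int(np.argmax(env))
# Search for the strongest peak after the direct sound has died away.
search_from = direct + 2 * n
echo = search_from + int(np.argmax(env[search_from:]))
distance = lag_to_wall_distance(echo - direct)
print(f"estimated wall distance: {distance:.2f} m")
```

In a real room several reflections overlap, so picking the single strongest peak after the direct sound is only a starting point.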

The ipynb export gives all the details of the processing.